I’ve recently had a number of emails from Don of Tallahassee, describing various ways in which he thinks Voynichese can be decomposed into simpler subunits: broadly speaking, his scheme is similar to Jorge Stolfi’s well-known crust-mantle-core model, but with a very much larger base group.

Numerically, Don’s model works well. In my opinion, though, it doesn’t yet move us towards any of what I would consider the basic milestones we need to pass before we can crack the puzzle of Voynichese.

If anyone wants to be the Voynich Champollion, here is my list of the milestones you’ll need to tackle in your research programme, with various sample challenges. I don’t mind admitting that I haven’t yet succeeded at any of these: make no mistake, they are all hugely difficult.

(In the context of Don’s models, my opinion is that he – like many others before him, so it is in no way a criticism – has effectively skipped over the first three milestones, and gone straight for the modelling milestone. But we all need to get vastly more confident about the first three milestones before we can start doing modelling in an effective way.)

Milestone #1: Reading

Personally, I’m not convinced that we’re even reading Voynichese accurately off the page yet.

For example:

* Page-initial letters have quite a different instance frequency distribution from anything else, particularly in the Herbal pages. Why should that be?

* Line-initial letters have, again, a different instance frequency distribution as compared to text within lines. Is it therefore safe to assume that these are the same kind of text as each other?

* In 2006, I proposed that EVA ‘aiin’ characters may well represent Arabic digits, by steganographically enciphering the values using different shapes of the scribal flourish on the tail of the (‘v’-shaped) EVA ‘n’. This basic hypothesis needs to be tested microscopically and with careful imaging techniques, but my proposals to the Beinecke some years ago to do this were turned down.

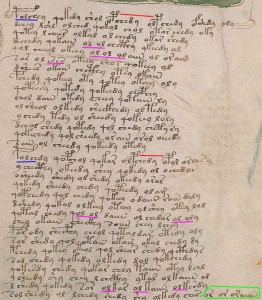

* Philip Neal has pointed to evidence that certain stylized text sequences may be quite different from the rest of the text. There are both ‘vertical Neal keys’ (down the start column of many pages) and ‘horizontal Neal keys’, which often appear about two-thirds of the way across the top line of a page or paragraph, and are often ‘bracketed’ by a pair of single-leg gallows (‘p’ or ‘f’).

* In 2006, I proposed that Neal keys might form part of a tricky in-page transposition cipher (as described briefly by Alberti in 1467), where the gallows characters might form references to within key-like sequences. But this hypothesis has not been tested any further.

Challenge: when we try to decipher Voynichese, are we even trying to decipher the right thing? When there are so many different things that each suggest that the text as a whole is not a homogeneous entity, why do so many people persist in treating it as if it were a single, simple language?
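One way to start putting numbers on the positional differences listed above is to compare the distribution of line-initial characters against the distribution of characters everywhere else. The following is only a minimal sketch: the function names are my own, the sample lines are invented EVA-style strings rather than lines from any real transcription, and it naively treats each EVA character as one glyph (which is exactly the assumption Milestone #2 calls into question).

```python
from collections import Counter

def positional_counts(lines):
    """Count EVA characters in line-initial position vs. everywhere else."""
    initial, elsewhere = Counter(), Counter()
    for line in lines:
        # Assumption: '.' and ' ' are word separators, not glyphs.
        glyphs = line.replace(".", "").replace(" ", "")
        if not glyphs:
            continue
        initial[glyphs[0]] += 1
        elsewhere.update(glyphs[1:])
    return initial, elsewhere

def total_variation(a, b):
    """Total variation distance between two count tables (0 = same, 1 = disjoint)."""
    na, nb = sum(a.values()), sum(b.values())
    if na == 0 or nb == 0:
        raise ValueError("both count tables must be non-empty")
    keys = set(a) | set(b)
    return 0.5 * sum(abs(a[k] / na - b[k] / nb) for k in keys)

# Invented toy lines for illustration only, NOT a real transcription.
toy_lines = ["pchedy.qokeedy.dy", "dchedy.okaiin", "schol.daiin.qokedy"]
initial, elsewhere = positional_counts(toy_lines)
print(total_variation(initial, elsewhere))
```

The same comparison could be run for page-initial characters, or section by section. A large distance would not by itself say why the positions differ, only that pooling them into one sample is risky.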

Milestone #2: Parsing

The second roadblock is that we can’t yet even parse Voynichese. Because of the ambiguities and weird letters, Voynich Manuscript researchers use a stroke-based transcription called ‘EVA’: this lets us transcribe the text and talk about it, even if we disagree (or are uncertain) about how the transcribed strokes should be grouped into letters.

For example:

* Is ‘ch’ a unique letter or is it a ‘c’ letter followed by an ‘h’ letter?

* Is ‘ii’ a pair of ‘i’ characters or a separate character?

* Is ‘ee’ a pair of ‘e’ characters or a separate character?

* Are ‘cth’ / ‘ckh’ / ‘cfh’ / ‘cph’ actually a t/k/f/p gallows character followed or preceded by ‘ch’, or four entirely separate composite letters?

Challenge: what kind of statistical tests would help us compare multiple different candidate parsing schemata, to help us decide which ones are more likely?
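As one hedged illustration of what such a comparison might look like, the sketch below parses the same EVA words under two candidate glyph inventories and computes the within-word conditional entropy of the next glyph given the current one. The choice of statistic, the greedy longest-match parser and the toy word list are all my own assumptions for the sake of the example. Entropies computed over different alphabets are not directly comparable without care, so this is a starting point rather than a decision procedure.

```python
import math
from collections import Counter

def parse(word, compounds):
    """Greedy longest-match split of an EVA word into glyphs."""
    ordered = sorted(compounds, key=len, reverse=True)
    glyphs, i = [], 0
    while i < len(word):
        for c in ordered:
            if word.startswith(c, i):
                glyphs.append(c)
                i += len(c)
                break
        else:
            # No compound matches here: fall back to a single EVA character.
            glyphs.append(word[i])
            i += 1
    return glyphs

def conditional_entropy(words, compounds):
    """H(next glyph | current glyph) in bits, counted within words only."""
    pairs, firsts = Counter(), Counter()
    for w in words:
        g = parse(w, compounds)
        for a, b in zip(g, g[1:]):
            pairs[(a, b)] += 1
            firsts[a] += 1
    total = sum(pairs.values())
    if total == 0:
        return 0.0
    return -sum(n / total * math.log2(n / firsts[a]) for (a, _), n in pairs.items())

# Invented toy word list for illustration only, NOT a real transcription.
toy_words = ["chedy", "shedy", "qokeedy", "daiin", "okaiin", "cthey"]
scheme_a = set()                        # every EVA character is its own glyph
scheme_b = {"ch", "sh", "cth", "ii", "ee"}  # hypothetical compound glyphs
print(conditional_entropy(toy_words, scheme_a))
print(conditional_entropy(toy_words, scheme_b))
```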

Milestone #3: Tokenization

The third roadblock is that there seems to be strong visual evidence that characters are not the same as tokens: which is to say that some individual letters in the plaintext may map to multiple letters in the Voynichese ciphertext.

For example:

* Is ‘qo’ a token?

* Is ‘dy’ a token?

* Is ‘o’ + gallows a different kind of token to just plain gallows?

* Is ‘y’ + gallows a different kind of token to just plain gallows?

Challenge: what kind of statistical tests would help us compare multiple different candidate tokenization schemata, to help us decide which ones are most likely?
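A very simple first statistic for questions such as “is ‘qo’ a token?” is pair cohesion: how often the first character is immediately followed by the second, and how often the second is immediately preceded by the first. The sketch below is a minimal illustration under my own assumptions (the function name is hypothetical and the words are invented EVA-style strings). A pair that scores high in both directions behaves more like a single unit, but that is suggestive rather than conclusive.

```python
def pair_cohesion(words, first, second):
    """Return (forward, backward) cohesion for a candidate two-character token.

    forward  = share of `first` occurrences immediately followed by `second`
    backward = share of `second` occurrences immediately preceded by `first`
    """
    n_first = n_second = n_pair = 0
    for w in words:
        for i, ch in enumerate(w):
            if ch == first:
                n_first += 1
                # Slicing avoids an IndexError at the end of the word.
                if w[i + 1:i + 2] == second:
                    n_pair += 1
            if ch == second:
                n_second += 1
    forward = n_pair / n_first if n_first else 0.0
    backward = n_pair / n_second if n_second else 0.0
    return forward, backward

# Invented toy word list for illustration only, NOT a real transcription.
toy_words = ["qokedy", "qoty", "okal", "dal", "chedy"]
print(pair_cohesion(toy_words, "q", "o"))
print(pair_cohesion(toy_words, "d", "y"))
```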

(Note that Milestones #2 and #3 overlap sharply, making the process of getting past them quadratically more difficult, in my opinion.)

Milestone #4: Modelling

Even if we get to the stage that we are able to read, parse and tokenize Voynichese with some degree of certainty, we still face many grave difficulties, not least of which is that we have at least three ‘dialects’ to solve at the same time – Currier A, Currier B, and ‘Labelese’ (for want of a better term). For each of these languages/dialects, we need to model how the language functions and use the results to understand its internal structure.

For example:

* What do the contact tables between adjacent tokens suggest?

* Can we produce Markov models for each of these ‘languages’?

* Is ‘qo’ a free-standing unit (i.e. one that is only steganographically prefixed to words), or is it genuinely an integrated part of words?
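To make the first two questions concrete: a contact table is just a count of which token follows which, and normalizing each row gives the transition probabilities of a first-order Markov model. The sketch below assumes the text has already been tokenized (which, per Milestone #3, we cannot yet do with confidence), and the token sequences shown are invented for illustration. In practice you would build one table per ‘language’ and compare them.

```python
from collections import Counter, defaultdict

def contact_table(sequences):
    """Count how often token b immediately follows token a within a sequence."""
    table = defaultdict(Counter)
    for seq in sequences:
        for a, b in zip(seq, seq[1:]):
            table[a][b] += 1
    return table

def transition_probs(table):
    """Row-normalize a contact table into first-order Markov probabilities."""
    probs = {}
    for a, row in table.items():
        total = sum(row.values())
        probs[a] = {b: n / total for b, n in row.items()}
    return probs

# Invented, pre-tokenized toy words for illustration only, NOT real data.
toy_sequences = [
    ["qo", "k", "e", "dy"],
    ["qo", "t", "e", "dy"],
    ["o", "k", "aiin"],
]
table = contact_table(toy_sequences)
probs = transition_probs(table)
print(dict(table["qo"]))
print(probs["k"])
```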

Challenge: what is the mapping between Currier A, Currier B, and Labelese? Can we somehow normalize the three such that they all conform to a single unified scheme? Or are there basic differences between them such that this is impossible?