Thanks to a tip from the ever-busy Mark Knowles, here’s a recent presentation by Lisa Fagin Davis on the Voynich Manuscript to the University of Toronto’s Medieval Studies department:

She notes that the first 14 minutes (it’s about an hour and a half, including the Q&A) should be familiar to most Voynich researchers, so feel free to fast forward to there without missing anything new.

So: is there anything new?

Well (and inevitably), yes and no. The recent Yale X-ray fluorescence imaging of folio 1 (it’s an X-ray, so you see both sides of the folio) is certainly interesting. For example, it’s good to know for sure that (probably) Marci’s ink down the right-hand side of f1r (the attempted decryption column) is zinc-heavy rather than iron-heavy, and that Wilfrid Voynich used a sulphur-based reagent to try to bring out Sinapius’ (not “Tinapius”, sigh) marginalia. But that’s only really an imaging confirmation of what Voynich researchers have collectively thought for 20+ years: there hasn’t really been much disagreement around that aspect of the manuscript’s materiality.

She also reported on an ongoing project to use the receding size of the waterstains at the top of lots of the early pages to (weakly) predict the original quiration / nesting order of the bifolios. No strong results yet, but work is still ongoing. My prediction about the prediction (I looked at this topic 20 years ago): it’s too weak to really be sure, but perhaps it will produce results that can be combined with other results.

The big news, though, is what she didn’t say. I remember asking Lisa several years ago about why she – a codicologist – hadn’t taken on what I considered then (and, to be fair, still do) the Voynich Manuscript’s #1 codicological challenge, which was to reconstruct the original page/folio/bifolio order/nesting. (I recall calling this “the Everest of codicology”, for what it’s worth.) She basically slapped me down, saying that this was a waste of time, and that it would not produce any worthwhile results. I remember thinking at the time that this sounded like the worst example of codicological reasoning I’d heard for a long time. But now – mirabile dictu – she’s citing Glen Claston and me, e.g. trying to test our hypotheses about Quire 13 (Q13A and Q13B) and Quire 20 (Q20A and Q20B). So if it is a codicological rabbit hole I’ve been down for decades (since long before the Frascati meeting), I at least now have some esteemed company.

To be fair, she did invest a little bit of time in the presentation rubbishing my Curse of the Voynich reasoning that (at least) one of the bifolios in Q13 has been bound back to front (or inside out, depending on how you look at it). But because my reasoning there was flawless, I can only deduce that her evidence against it is marginal (and wrong). *laughs*

The problem with the presentation (and there is indeed a problem) is that she’s been trying to use text similarity metrics (you know, the same kind of thing that Rene was compiling 20+ years ago) to predict page adjacency, which I’m really not sure has the kind of predictive strength it would undoubtedly have when applied to Vincent of Beauvais’ Speculum, or indeed any text you can actually read and understand. We have reconstructed so little of the way the Voynich was constructed that I think this is wobbly in the extreme: were the bifolios written free-standing (i.e. one at a time), or folded into gatherings? If the text was enciphered, is the text analysis picking up encipherment artifacts or underlying text artifacts?

Really, my opinion is that while the text similarity metrics are pretty good for broadly clustering pages together, they’re extremely shaky as codicological support (if you’ll forgive the pun).

In the end, LFD’s presentation swings round to the hypothesis that maybe Q20 (and indeed many other parts of the Voynich Manuscript) was originally a bundle of “singulions” (a fairly rare term meaning ‘a single-bifolio gathering/quire’; I’d personally have preferred “singletons”), i.e. that it had no nesting structure at all. This is, of course, quite bold, but because it rests on a wobbly foundation of text similarity metrics, I’m not at all comfortable. It’s new, it’s interesting, but given its reliance on wibbly stats, is it really codicology? Personally, I think not, but it may yet point the way to future real codicology. Perhaps this is the start of something interesting, but caveat lector nonetheless (who was Hannibal’s bookish brother).

Nick’s own commentary

There are a few places in the Voynich Manuscript where we can see drawings going from one side of a bifolio to another, most famously in the balneological quire Q13, which has water flowing from one side to the other on two halves of a bifolio that it seems safe to say was probably at the middle of a gathering in the original unbound state. But if everything is singulions, this means nothing at all. Still, we can all look forward to the peer-reviewed paper on the subject. (Reviewer #2 says hi.)

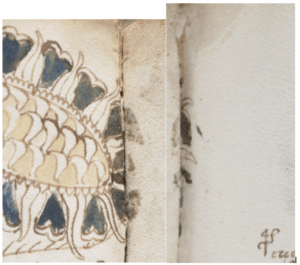

Another example that doesn’t get a lot of online love is between f33v and f40r: here you can see the drawing extending slightly over the bifolio centre (and also the ‘heavy’ blue paint leaching into the pages now bound opposite both of them). Does this mean that the f33-f40 bifolio was originally the centre of a gathering/quire, or was this just a byproduct of the way that the bifolios were (hypothetically) written unbound? This is the kind of difficulty you face when trying to do codicological reasoning to try to reduce the vast combinatorial space to something more reasonable, and progress has been slow.

Looking for contact transfers of ink or paint (and I don’t think the blue paint tells us anything useful) remains one of the few non-text-based avenues that yield anything, but without spectrography this is still difficult. For example, did the red paint mark on the top left f27r come from f53v or even from f87v, or was it just a stray drip? Multiply that uncertainty by a thousand, and only Bayesians will still be happy.

Personally, I still think that cross-referencing the DNA of the bifolios stands a good chance of massively reducing the search space, and that this is one of the few genuine routes that codicologists such as LFD should be pushing for. Of course, there’s a (tiny) chance that this will tell us nothing, but I have to say that I remain mystified that LFD remains so dead against it (and I thought her codicological reasoning there was extraordinarily suspect). Perhaps in a decade’s time she’ll join me down that rabbit hole as well, who knows?

Hey, Nick, just wanted to jump in to explain the difference between “singulion” and “singleton” – a singleton is a single leaf. A singulion is a quire made up of a single bifolium, which is what I’m talking about here.

Lisa Fagin Davis: it’s technically correct, yes, but it is nonetheless a particularly rare term, even among the many other rare terms codicologists like to use!

I’m not “dead set” against DNA testing, it’s just that there is literally nothing to compare the DNA to! We could ascertain the species of every bifolium, but that really wouldn’t tell us anything that would conclusively establish a place of origin. Someday when there is a massive database of parchment samples of a known date and place of origin (we would need MILLIONS of samples from around the world to create a useful dataset), sure, that might be useful. But 1) it’s not up to me or anyone but the Beinecke curator and conservator to decide whether to conduct those tests and 2) it’s almost certainly not going to happen, at least not anytime soon. So in the meantime, we focus on other material evidence that we CAN get at, like stains and offsets and images that cross the gutter.

Re LSA; the whole point is that LSA is truly language agnostic…the fact that WE can understand the Latin of the Speculum Humanae Salvationis is irrelevant. The data doesn’t care what the words MEAN, which is why it’s called LATENT Semantic Analysis. As I said in the lecture, that’s what makes it a potentially useful tool for the VMS.
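To make the “language agnostic” point concrete in the simplest possible setting, here is a minimal sketch. It is not LSA itself (which additionally applies a truncated SVD to the term-by-page count matrix) and it is not the code from the forthcoming article: it is plain bag-of-words cosine similarity, chosen because it shows the same property in a few lines. The two “pages”, their EVA-like tokens, and the helper names `cosine` and `relabel` are all invented for illustration. The idea is that swapping every token for an arbitrary label leaves the page-to-page similarity unchanged, so nothing in the calculation depends on what the tokens mean.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def relabel(tokens, mapping):
    """Replace each token by an arbitrary label (a one-to-one mapping)."""
    return [mapping[t] for t in tokens]


# Hypothetical pages: invented token sequences, NOT real transcription data.
page_a = "daiin chedy qokeedy daiin shedy".split()
page_b = "qokeedy chedy daiin okaiin chedy".split()

# Build an arbitrary one-to-one relabelling of the whole vocabulary.
vocab = sorted(set(page_a) | set(page_b))
mapping = {tok: f"w{i}" for i, tok in enumerate(vocab)}

sim_original = cosine(Counter(page_a), Counter(page_b))
sim_relabelled = cosine(
    Counter(relabel(page_a, mapping)),
    Counter(relabel(page_b, mapping)),
)

print(f"original tokens:   {sim_original:.4f}")
print(f"relabelled tokens: {sim_relabelled:.4f}")
```

Both numbers come out the same, because only the co-occurrence counts enter the calculation. Whether such counts carry enough signal in Voynichese to support inferences about bifolio order is a separate question, and this sketch says nothing about it.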

And “is it really codicology”? Of course it is! Anything that has to do with the structure of a manuscript is codicology.

When our article comes out, the code and data will be available to all in a GitHub repository, and I hope that this data will help us all keep moving forward.

It is a VERY rare term (in fact, I’ve never seen it used in English before), but it is not the same as a singleton, and the two terms shouldn’t be used interchangeably.

Nick,

Thanks for this review. Informative and helpful as ever.

Glad my hope of yesterday was realised – the substantial, original research from you and Wladimir was acknowledged.

I do like the ‘by-bifolium’ opinion. I like it very much.

Lisa Fagin Davis: here’s what you said in 2022:

https://ciphermysteries.com/2022/04/01/voynich-paper-suggestion-1-dna-gathering-analysis#comment-459241

The evidence of the Voynich Manuscript itself (with its unusual vellum, unusual finish, unusual foldouts, etc) is that its maker did not follow your hypothetical manuscript construction playbook. The bifolio DNA information offers us what I think is an exciting possibility of finding unseen links between bifolios, that we all agree have ended up bound out of their original order. Though I agree it’s possible that this process may ultimately come to nothing, my opinion is that ducking the question completely remains a lousy way to do either science or history.

All the same, I’m heartened that you have changed your position about the importance of trying to reconstruct the Voynich Manuscript’s original ‘alpha’ state. I now look forward to the day when you change your opinion about the (I think very high) potential value of bifolio DNA testing, and become an advocate for it being carried out, rather than a gatekeeper blocking it. Your voice would be a positive one there.

Lisa Fagin Davis. Codicology is weak in deciphering MS 408. It cannot decipher the text of the manuscript. Someone who knows how to code should or must work on the research. So codicology is useless. As we all see, when you haven’t moved an inch in the research of the manuscript for 20 years. At the beginning of the manuscript folio 1v it is written: I was born in 1466. And the year is encrypted in a symbolic plant. (The text says: there are 14 green leaves. And there are six and six gold leaves). A substitution is drawn on folio 2r. Specifically: Homophonic substitution. The author of the manuscript shows everyone who can think logically with folio 2r how the manuscript is encrypted. What more could a scientist want? The author shows the cipher. And at the same time shows the year when he was born. The entire manuscript is written in the old Czech language. There are also a few Polish and Latin words in it. I tried all 3 artificial intelligences. And not one of them can translate and decipher the text of the manuscript. The AI itself wrote to me that not even a quantum computer can do it.

If you’re talking about trying to reconstruct the animal skins based on relationships between the DNA of different bifolia, sure, that would be interesting, but in truth that won’t necessarily help establish textual or structural relationships between those bifolia. See, for example, this blogpost, where I show an example of two bifolia that appear to be cut from the same skin but aren’t adjacent in the final codex:

https://manuscriptroadtrip.wordpress.com/2021/02/04/reverse-engineering-the-codex/

I was actually disappointed by this outcome, as I had always assumed/hoped that bifolia from the same skin might end up adjacent in the stack of blank sheets and therefore adjacent in the manuscript. But alas, at least in this example, that wasn’t the case.

I’m a little offended that you consider me a “gatekeeper” who is blocking progress by not advocating to the curator. In fact, I had lunch with her and Yale conservator Paula Zyats just a few months ago (the new curator is a friend and mentee of mine whom I have known for some time). I broached this very idea as well as more MSI testing, more XRF, micrometer measurements of bifolia thickness, and other ideas. She is not willing to devote time/resources to these ideas at the moment, for many reasons including her status as a new (and somewhat lower-on-the-hierarchy-than-Ray-was) curator, concerns about the risk/reward of the significant handling of the manuscript and light exposure these ideas would require, major staff cuts, and current funding/time priorities of the library. So it will not help our cause for me to continue to bring it up in the short term, although I’m hopeful that she will eventually change her mind. We’ll see.

Nick, Lisa,

If I may add my twopence worth…

Even if DNA testing were possible and at present (as Lisa rightly says) it isn’t, then at the very best, and only *if* all the vellum came from locally-slaughtered animals, you might suggest where the folios had been inscribed.

And while that might be a useful indicator if a manuscript had been made in a ninth-century monastery, it isn’t for Europe during the fourteenth or fifteenth century. I looked into this question, and raised the ‘pecia’ possibility some time ago and used the example of what happened when the Papal court was planning a return to Rome. Most of the books were left behind but even copying the relatively few wanted, plus the main documents, brought vellum, prepared and cut into quires, from a network of parchminers across much of Europe. That was in the 1370s. By the early fifteenth century, you have a regular network of trade in paper, vellum and parchment passing to-and-fro across the Mediterranean. So if the vellum was acquired from a stationer – which would be common enough by the early decades of the fifteenth century, especially if the town had a chancery or a University – then the probability is high that any such DNA analysis would form a patchwork across Europe – and there’s no guarantee (pace Davis) that the scribes were in Europe. It’s not as if people couldn’t travel or iron-gall ink on vellum was unknown elsewhere.

What I’d really like to know is whether, when the manuscript was disbound for the first ‘official’ facsimile, the binding threads were kept. I want to know when the quires/bifolia were first bound… among other things.

Lisa Fagin Davis: I remember that post, yes, but I’m far from convinced that your reconstruction of how a single German monastic scriptorium happened to work on one day in the 12th century is as universally applicable as you seem to think.

I’m sorry that you felt a little offended by my characterising you as a gatekeeper as far as bifolio DNA testing goes: I was only going on what you replied to me here, which seemed to be a long litany of (what I still think are basically incorrect) reasons why it shouldn’t be done. I have previously heard from many other sources that you are an enthusiastic, open-minded advocate of all kinds of physical testing, and I’m delighted to find out that this was indeed the case.

Diane – the manuscript wasn’t disbound when it was imaged for the facsimile.

I still like the idea of isolating a sample of the author/scribe/illustrator’s DNA by looking for this DNA in a place, like under the ink, where it would not be mixed in with more recent DNA.

**Lisa, allow me to offer a different perspective.**

After more than twenty years of codicological research into MS 408, the focus remains on material hypotheses, while the manuscript’s actual text remains undeciphered. Codicology may help reconstruct the physical structure, but it lacks the tools to unlock the content. And it is the content that holds the key.

On folio 1v, the author clearly encodes his birth year: 1466. The symbolic plant contains 14 green leaves and two groups of six golden leaves. On one green leaf, two letters are written: J and T. According to Jewish homophonic substitution:

– Number 1 = a, i, j, q, y

– Number 4 = d, m, t

J + T = 14, which matches the number of green leaves. This confirms the encoded year. Anyone familiar with cryptography and Jewish substitution ciphers should recognize this immediately.

On folio 2r, the encryption method is illustrated—specifically, homophonic substitution. The author shows, to anyone who can reason logically with folio 2r, how the manuscript is encrypted. This is not speculation, but a verifiable cryptographic structure.

The manuscript is written in Old Czech, with elements of Polish and Latin. It is not an unknown language, but a historical linguistic layer that can be decrypted. I’ve tested all available AI systems—none could translate the text. Even AI itself admitted that not even a quantum computer could do it. That only confirms: without the cipher key and linguistic context, the manuscript remains unreadable.

Instead of further hypotheses about “singulions” or water stains, we should focus on what the author is actually communicating. The manuscript is not a riddle without a key. The key is embedded within—it simply needs to be seen.

I saw a documentary about the discovery of the body of Richard III where they proved it was him using DNA from a living descendant. If the author/scribe/illustrator’s DNA could be isolated then maybe using techniques like genetic genealogy it would be possible to identify the individual. One really needs to identify a site in the manuscript where that DNA is most likely to be found and not contaminated by other later DNA.

There must be a location or locations in the manuscript where only the author/scribe/illustrator’s DNA can be found. I wonder if this has been done with other manuscripts or documents.

Mark Knowles: the only place I can think might still have original DNA would be any original threads (when you pull tight on a thread, your skin sheds slightly), but I think those have long gone (unless Hellmut Lehmann-Haupt kept any?) And anyway, it would be the DNA of the binder, not of the maker. So I’m not hugely optimistic, alas.

If isolating the author/scribe/illustrator’s DNA is possible then it will probably have to wait until there are further advances in the technologies available for doing this kind of thing.

Mark Knowles you could search here: This is the Austrian Hardegg family. What is important here. Look at who founded the family. The founder of the family was: Vítek II. The link also says when Eliška married Henry of Hardegg. On the Internet you will surely find a photo of a painting in which Eliška’s grandson is painted. Julius II. of Hardegg.

https://cs.wikipedia.org/wiki/Hardekov%C3%A9

Nick, I am a bit surprised by your impression that Lisa’s (and Colin’s) ongoing research might not be contributing something new and noteworthy to the field. From your comments (for example, “The problem … is that she’s been trying to use text similarity metrics”), it sounds like there may be some misunderstanding about how the LSA technique actually works.

Of course, as with any method, these analyses can be misapplied—and that does happen quite often—but I didn’t see any indication of that in Lisa’s lecture. It’s true that Lisa and Colin may still need to include more control cases to ensure that their approach and findings are both rigorous and statistically sound, but we’ll need to wait for the published results before making that judgment. I for one am hopeful and very excited to see their full results become available.

Lisa,

Thank you for the correction.

Andrew Steckley: in general, I think whenever you try to use a thing from outside your field to make a judgment about things inside your field, you have to be aware of the risks – specifically, that the evidence you’re trying to bring in is strong enough to make that transition. In this instance, the question is about whether LSA metrics are strong enough to allow Lisa Fagin Davis (or indeed anyone else) to draw inferences about the bifolio ordering etc. It would be nice if they were (and indeed people have been calculating all kinds of Voynichese word metrics since at least the 1970s for these [and many other] reasons), but… let’s be realistic, because of the huge relative size of the Voynichese dictionary, it really feels to me like a number of confounding mechanisms must be getting in the way of this.

In the end, my judgment is that Voynichese is [effectively] so ‘noise-riddled’ that we just can’t get to reliable enough inferences by applying LSA (or any other word-based metric) to it. This really isn’t to say that Colin or Lisa are doing something wrong or bad, but rather that, in such extrema as Voynichese, a heartfelt desire to discern signal from noise isn’t enough to achieve that result. I don’t honestly believe that more control cases will help here. But maybe don’t take my word for any of this, ask Rene Zandbergen as well, because I’m pretty sure that he went through a number of similar mills 20 years ago, and ended up drawing broadly the same conclusions as me here (as I recall).

I agree — except for the suggestion that anyone has actually applied anything like LSA to the Voynich pages before. In theory, LSA could detect semantic relationships at a finer scale than any of the topic analysis methods I’ve seen used by people on the VMS so far. That said, Lisa and Colin are stretching the limits of the technique by assuming it can pick up semantic continuity at a sub-page level. (And that’s aside from the ‘noise-riddled’ problem.)

But a key flaw with all the topic analysis attempts I’ve seen on the VMS is that those methods are all designed on the assumption that a document has topic segmentation to begin with. In other words, if a document genuinely contains distinct topics, those algorithms can be used to find and separate them. But if the text doesn’t actually have different topics, the methods will still produce the “best possible” divisions they can, essentially forcing artificial boundaries that may not reflect anything real. None of the attempts I have seen have understood the need to use control cases to establish the validity of the approach for the case of the VMS.

In that sense, the LSA approach stands out as something meaningfully different — and potentially more promising — than the previous topic analysis efforts.

Hi all,

I was puzzled about why Nick wrote,

“To be fair, she did invest a little bit of time in the presentation rubbishing my Curse of the Voynich reasoning that (at least) one of the bifolios in Q13 has been bound back to front (or inside out, depending on how you look at it). But because my reasoning there was flawless, I can only deduce that her evidence against it is marginal (and wrong). *laughs*”

I think it’s another instance of one person criticising work done without wanting to name names – and so another person reads it as criticism of their own.

Obviously this is just a guess, but I’ve recently had correspondence from a newcomer who claims every second image in the vegetal section is upside down, and says for example that the image on f.9v, when inverted, becomes a drawing of the female anatomy and more exactly the reproductive organs. Also involved are ideas of “rich women’s rituals”, the Medici and Lucrezia Borgia.

Christine (or Kris) is gripped by enthusiasm and sharing her paper by all means available to her. So – as I said I’m guessing – but perhaps that’s the material Lisa was thinking of, not Nick’s. Maybe.

Diane: LFD did specifically refer to my 2006 theory that one of the Q13 bifolios ended up bound back to front, presumably because (though I haven’t yet checked) her singulion theory explains the same observations but without requiring the inversion. Details to follow in her paper.

Andrew Steckley: many, many ships have already been sunk on the rocks of a presumption of semantically meaningful Voynichese wordfulness, so I can’t help but wonder whether Colin and Lisa have been lured by the same sirens.

**Comment for Andrew Steckley:**

Mr. Steckley, your study on token distribution in MS 408 may appear statistically elegant, but it reveals a fundamental misunderstanding of the nature of the manuscript. Analyzing the Voynich Manuscript without knowledge of its cipher system is like measuring the depth of a river without water.

The manuscript is written in a homophonic substitution cipher, clearly demonstrated on folio 2r. Without understanding this system, one cannot grasp the meaning, structure, or linguistic logic of the text. The manuscript is not composed in an unknown language, but in Old Czech, with Polish and Latin elements. This is not a hypothesis—it is the result of over thirteen years of research.

Your analysis relies on Zandbergen’s outdated transliteration, which does not reflect the true structure of the glyphs. The EVA alphabet is methodologically flawed and leads to false conclusions. Moreover, your study fails to address a basic question: is the text read left-to-right or right-to-left? Without resolving this, no serious analysis can proceed. This omission alone shows that your research method stands on shaky ground.

On folio 1v, the author encoded his birth year (1466) using a symbolic plant. This is no coincidence—it is a deliberate cryptographic act. The manuscript itself offers the key; it simply needs to be recognized. If research continues to ignore these foundational clues, the manuscript will remain misunderstood.

It is time for Voynich research to move beyond statistical illusions and engage in genuine decipherment.

The whole point is that we AREN’T assuming that the text is meaningful at all. The data speaks for itself. And I certainly wasn’t “trashing” anyone. New data leads to refinement, all of which moves the work forward. That can only be to the good.

Lisa Fagin Davis: the point I’m trying (and clearly failing) to make is that every single word-based analysis of Voynichese over the last 50+ years has failed to find ways in which they behave like ‘normal’ words. Even the best studies only find new ways in which ‘the word model’ fails to please.

Ever since the late Stephen Bax started actively encouraging people to put forward their naive linguistic takes, a lot of Voynich research has been circling that whole ‘vord’ drain. And LSA only seems to hope for the best, not tackle the real issue of why word analyses always – and I do mean always – fail.

Nick, have the rocks of semantically empty Voynichese claimed any fewer ships? In the end, the number of wrecks matters little beside the ship that slips safely through the Straits of Voynich. But you seem to be concluding that because many ships have attempted and sunk, there is therefore no open sea to be reached beyond the passage. I can’t see how that follows.

And Lisa is correct: LSA does not presuppose that a text is meaningful, only that its words exhibit patterns of co-occurrence reflecting local relationships across a sequence. Such patterns often emerge naturally in coherent, meaningful prose, but they can equally well arise from an artificial language generator. If L & C can detect these patterns, the next step is straightforward: to test whether the observed structure is statistically significant or merely a product of chance. As I recall, Lisa presented some data in her talk showing that they are correctly attempting to assess whether their results are significant.
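As a rough illustration of what “statistically significant or merely a product of chance” could mean for page order, here is a minimal sketch of a permutation test. Everything in it is an assumption made for the example: the toy “pages”, the choice of mean cosine similarity between neighbouring pages as the statistic, and the function names. It is not the method used in the talk or the forthcoming article. The logic is to score the proposed order, then shuffle the pages many times and count how often a random order scores at least as well.

```python
import math
import random
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mean_adjacent_similarity(pages):
    """Average similarity between each page and the one that follows it."""
    sims = [cosine(pages[i], pages[i + 1]) for i in range(len(pages) - 1)]
    return sum(sims) / len(sims)


def permutation_p_value(pages, n_shuffles=1000, seed=0):
    """Estimate how often a random page order scores >= the proposed order."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    observed = mean_adjacent_similarity(pages)
    shuffled = list(pages)
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(shuffled)
        if mean_adjacent_similarity(shuffled) >= observed:
            hits += 1
    # The +1 terms count the observed order itself as one possible outcome.
    return observed, (hits + 1) / (n_shuffles + 1)


# Toy data (invented): page i uses tokens t_i, t_(i+1), t_(i+2), so the
# vocabulary drifts gradually and neighbouring pages share two tokens.
pages = [Counter(f"t{j}" for j in range(i, i + 3)) for i in range(8)]

observed, p_value = permutation_p_value(pages)
print(f"mean adjacent similarity: {observed:.3f}")
print(f"permutation p-value:      {p_value:.4f}")
```

On this deliberately clean toy data the proposed order beats nearly every shuffle, so the p-value is small. On noisy text the same test may well show no separation at all, which is the kind of control both sides of this thread seem to be asking for.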

You say “…LSA only seems to hope for the best, not tackle the real issue of why word analyses always – and I do mean always – fail.” That’s awfully prophetic. Every attempt to navigate the Straits of Magellan also failed … until one didn’t.

Prof Zlatoděj: Actually it’s Dr. Steckley. And, with all due respect, your comment demonstrates a profound misunderstanding of both the purpose and methodology of the study. The analysis of token distributions does not depend on speculative claims about language or cipher; it addresses statistical properties observable regardless of interpretation. Invoking unverified assertions about a supposed Old Czech cipher only underscores how far outside your depth you are in commenting on this type of quantitative research.

Andrew Steckley: all I’m saying (which appears to be quite different to what you think) is that I’m bored to tears by reading word-based Voynichese analyses that start out hopeful, but ultimately deliver practically nothing. My genuine desire is for researchers to not keep returning to the Voynich Manuscript to apply unbelievably clever semantically-based toolkits (and I think it’s fair to include LSA in that category), but instead to use their brains and guile to try to understand quite why it should be that Voynichese words keep failing them.

Something is wrong with the bigger research picture here, and pitching it (as your comment does) as somehow a binary choice between meaningful and meaningless (Scylla and Charybdis?) comes across as overly reductive. There are plenty of ways in which Voynichese can have latent semantic content but where LSA’s toolkit fails to see it clearly enough. And there are also plenty of ways in which other (more metadata-like) things in the Voynichese text can be identified by LSA as having semantic content despite not actually relating to the meaning of the text in any useful way. I would be unsurprised if many things that flag up as similarities relate more probabilistically to the kind of gradual evolution in the internal structure of Voynichese that Glen Claston used to talk about (and which Rene Zandbergen still does), and not actually to the meaning per se.

Perhaps we need to think more broadly about what a piece of writing can record. It seems to me that everyone expects the Voynichese text should prove, ultimately, to be slabs of consistently spelled grammatical prose or poetry.

I don’t usually speak about the written text because neither palaeography nor cryptology is my field, and I appreciate how annoying it can be to have amateurs offer ‘bright ideas’.

With that said, I might add that when I did look for similar patterns at one stage I found they came closest to strings of abbreviated technical instructions. I think the examples I used were knitting patterns and weaving instructions (though now we use grid-paper for that). I was on the verge of getting a museum curator, a specialist in textile analysis, to give an opinion on whether a couple of pages *could* be rendered as a pattern in fabric – preferably one attested for the fourteenth or fifteenth century – when she insisted I name the “medieval manuscript” in question. The word ‘Voynich’ ended that conversation right there.

Isn’t the usual rule of thumb that ciphers are employed to protect political or mercantile interests?

Just a thought and not a new one. A broader definition of ‘text’

**Comment for Dr. Andrew Steckley:**

Dear Dr. Steckley,

Thank you for your response. I fully acknowledge your academic title, and precisely because of that, I find it necessary to highlight a fundamental distinction between quantitative analysis and substantive research into MS 408.

Your study of token distribution may be statistically elegant, but without understanding the cipher system, it remains methodologically isolated. The Voynich Manuscript is not a generative text—it is a cryptographic document. On folio 2r, the author explicitly demonstrates the method used: **homophonic substitution**. On folio 1v, he encodes his birth year (1466) using a symbolic plant, with 14 green leaves and two sets of six golden leaves. This is not poetic ornamentation—it is a cipher.

To analyze the manuscript without recognizing its encryption system is like measuring the depth of a river without water. The text is written in **Old Czech**, with elements of Polish and Latin. This is not speculation—it is the result of over thirteen years of research and decryption.

Your analysis relies on Zandbergen’s EVA transliteration, which is structurally flawed and leads to false conclusions. Moreover, your study does not address a basic prerequisite: the direction of reading. Is the text left-to-right or right-to-left? Without resolving this, no serious linguistic or statistical analysis can proceed.

The manuscript is not an unsolvable riddle. The author provides the cipher and the key—clearly and deliberately. What is needed is not more statistical modeling, but the ability to recognize what is already there.

I invite you to engage in a deeper dialogue—not about distributions, but about meaning. The manuscript speaks. It simply requires someone who can listen.

Respectfully,

**Josef Zlatoděj**

Independent researcher and cryptanalyst

I should add to the above that what I sent that curator were not pages of Voynichese, but Julian Bunn’s colour-coded pages. It was those which got me thinking about forms of non-standard and technical text.

Others I thought about include ephemerides, telephone books (remember them?), pages from Ptolemy’s tables… and so on.

Though I don’t expect the surviving medieval Latin corpus is likely to provide useful comparative texts or even many useful analogies – to judge from the glyphs and images.

Nick: I understand that you’re mainly expressing boredom rather than outright dismissing all such efforts, but I still think your concerns are somewhat misplaced. I assume that by “clever semantically-based toolkit” you mean the various NLP, algorithmic, and statistical methods developed in Data Science—as opposed to linguistic analyses of morphemes or mappings to source languages. Yet, I haven’t seen any attempt that actually uses such technical analysis to extract semantic meaning. The goal has generally been to test whether semantic content exists in the Voynichese—a far more modest aim than deciphering its meaning. In that sense, these studies don’t “fail” as you suggest; they simply yield some level of evidence either for or against the presence of meaningful content.

I agree that that isn’t a binary issue. There could well be a mixture of semantic signal and meaningless noise, or any point along that continuum. But that doesn’t invalidate—or doom—an effort to determine whether some semantic content is present. (That said, I think that many of these algorithmic approaches have been poorly executed.)

Your critique that “Voynichese words keep failing them” seems to rest on the assumption that the goal is to interpret the text. As far as I know, no current technique could achieve that. If the expectation is an actual “translation,” disappointment is inevitable.

More importantly, in the context of Lisa’s work, she and Colin are applying NLP methods for codicological purposes—specifically, to try to infer the original ordering of the folio pages. I don’t believe they expect their analysis to produce a translation of the Voynichese; in fact, I’m quite certain they don’t.

Andrew Steckley: yes, the quality has been variable in the past, though I would also add that in the last five years I have seen an uptick in well-executed Voynichese word-based analyses (even though none has achieved even remotely what its author(s) initially hoped). “Voynichese words keep failing” their high hopes: the mixture I refer to is not between semantic signal and noise, but between raw semantic text and a text confounded by a carefully arranged selection of tricky factors (such as steganography, transposition ciphers, verbose ciphers, abbreviation, etc) that our clever software has to try to get around before it even yields a ‘flat’ (uniform) text. The proper goal, then, is not yet to read the text but to find ways of undoing one or more layers of whatever has been done to it, to try to make it more uniform.

I know what Lisa and Colin are doing, but as I said in my post, something with that many leaps of faith propping the reasoning up sure ain’t codicology. But all the same, if it’s indicative of where codicologists might look next (e.g. the sequential singulion hypothesis), then that’s interesting… but it’s not codicology, rather it’s a prompt for codicology.

Nick: “not codicology, rather it’s a prompt for codicology”. Well now we ARE talking semantics 😉

Andrew Steckley: so, academic precision is good when you’re doing your own PhD, but when someone else uses it it’s semantics? Got it.

A. Steckley: I will tell you, doctor, why you can never understand the text of MS 408.

1. It is written in the old Czech language.

2. You will never find out the direction of its writing. (from the left? or from the right?)

3. You do not know where a word in the manuscript text begins or ends.

4. You do not know the Jewish homophonic substitution cipher.

5. You do not know the dialect and speech of the author.

So, doctor, these are the main things that need to be known for any educated doctor to be able to decipher the text of the manuscript. Without knowing what I have written here, no one will ever be able to decipher the text of the manuscript.

Best regards, Josef Zlatoděj.

PS. I translated the entire manuscript and so I know what is written in it and what its meaning and purpose are.

Nick: No one has suggested that. Rather, trying to differentiate “codicology” from “prompt for codicology” in this situation isn’t academic precision — it’s semantics. And it shows again that you are misunderstanding what L&C are doing and how they are using a particular tool. In fact — to be precise … academically — they aren’t using LSA to do a linguistic analysis to read the script, nor for cryptanalysis to decipher it. They are using it (and so far, indications are that they are using it correctly) to infer the physical structure of the manuscript. That’s codicology. If their analysis succeeds, they will know nothing more about what the Voynichese words say. They will know nothing more about if or how it incorporates a cipher. They will not even know if it contains semantic meaning or not. And they expect none of that. They will simply have evidence for the originally intended ordering of the folios. That’s not a prompt to then begin doing codicology. It’s already a codicological endeavor.

“Codicology” literally means anything having to do with the structure of a manuscript. So yes, of course what we’re doing is codicology. And as Andrew pointed out, we aren’t trying to “solve” the manuscript with this work. We’re trying to hypothesize the original sequence of folios and bifolios, which hopefully will then help those of you who ARE trying to “solve” it. You can’t “read” a book whose pages are out of order.

Lisa Fagin Davis / Andrew Steckley: for me, codicology is forensic, evidence-based, physical investigation. In this context, are LSA analyses hard evidence or just suggestive? If you think the former, you’re kidding yourself, honestly. Every study analysing Voynich words sits precariously close to pareidolia, and that’s not a good place to be.

I don’t honestly see what’s so wrong-headed about what I’m saying. Show me the physical evidence you have found as a result of considering the conclusions suggested by LSA, and we’ll be talking proper codicology again. But for now, it’s not codicology, not by a long shot.

Nick, Andrew

Talking semantics and precision..

Couldn’t see at first why Lisa’s constant use of “analogous” was bugging me.

Then a friend asked “why doesn’t Dr. Davis just say x is like y, or is similar to y?’ and I got it-

Lisa’s using that word because it creates a tacit assertion that the two things are very much alike when there’s no evidence to allow it.

It’s a kind of meme, something that sounds ok and serves to create an atmosphere of belief for a thing unsupported by evidence and even positively opposed by the balance of evidence.

So it’s a kind of meme – sounds good and convinces by less than direct or honest means. I don’t suppose Lisa invented this fox-y practice, but has absorbed the habit among a sub-set of Voynich theorists.

English is an odd language. The noun ‘analogy’ admits things compared have nothing very much in common: ‘Life is like a box of chocolates’.

But the adjective does the opposite. It tacitly asserts the very opposite – that the things compared are basically very similar indeed. You really could say, in those cases, that ‘a is like b’, for example – ‘the construction of a bat’s wing is like/analogous to that of a bird’s wing’.

But in Lisa’s video, ‘analogous to’ is badly misused. In the absence of supportive evidence, proof, or even a reasonable case. Basically, it works as propaganda does, creating belief without respecting the hearer’s right to understand why they should believe something true.

I went back to the video, substituting the word ‘like’ for ‘analogous to’ and the fudging was so much more obvious.

Why do people say ‘just’ semantics?

Watch the video, Nick. There is lots of physical evidence to support our results. And don’t tell me what codicology is *for you*. You don’t get to decide what that term means. What we’re doing might not be what *you* are interested in in terms of studying the materiality of the manuscript, but that doesn’t make it “not codicology.” You are an expert cryptologist. I am an expert codicologist. I don’t do linguistics or cryptography, and you shouldn’t claim to understand codicology. We are all better off when we stay in our respective lanes. That’s why I collaborate with computer scientists, linguists, cryptologists, and material scientists. I know where my lane is. You should do the same.

About Lisa’s experiment. I’m inclined to agree it may well tell us the order in which the inscribed pages were completed, but cannot prove the pages constitute the text of an original, coherent and consecutive book-text.

It’s fairly obviously a florilegium/compilation in my view. The style of drawing varies substantially between the very few ‘Latin’ style images or details (calendar-centre motifs; ‘castle’ detail and one or two others), then what I’ve come to think of as the ‘northern’ group, which include anthropoform figures made to look unlike living people, and the vegetal section – which definitely eschews any form that could be considered an effort to ‘imitate’ a living thing. Since anti-iconism is native to Arabia and spread thence, ultimately, as far as Constantinople and to what is now Afghanistan, but was never known in Latin culture, it’s thought-provoking.

Lisa F. Davis,

Your statement that “you cannot read a book whose pages are out of order” may sound compelling, but it does not reflect the reality of MS 408. In fact, the opposite is true: **if you understand the language, the cipher, and the content**, you can reconstruct not only the page order but also the original logic of the manuscript.

The Voynich Manuscript is not written in an unknown language—it is composed in **Old Czech**, with elements of Polish and Latin. On folio 1v, the author encodes his birth year (1466) using a symbolic plant:

– 14 green leaves

– Two sets of six golden leaves

– One green leaf marked with the letters J and T, which equal 14 in Jewish substitution

This is not decorative—it is a cipher.

On folio 2r, the encryption method is illustrated: **homophonic substitution**. The author shows, clearly and deliberately, how the manuscript is encrypted. These are not hypotheses—they are verifiable cryptographic structures.

Without understanding the content, no reconstruction of folio order can be meaningful. Material clues (stains, paint transfer, parchment size) may assist, but without deciphering the text, they remain **contextless**. Codicology without cryptology is like a map without a compass.

The manuscript speaks. And when you understand its language, it reveals not only its structure—but its purpose.

Josef Zlatoděj

Nick: Are the shapes on Mount Rushmore the result of intentional depiction of US presidents, or are they the result of natural erosion? One could claim that no amount of physical investigation of the rocks and cliffs themselves will produce “hard evidence” that we are looking at human-designed sculptures. Fortunately, that is not how rational science is performed. We can (and do in almost every area of scientific investigation) base conclusions on probabilistic considerations — pareidolia can be ruled out if the probabilities are overwhelmingly in favor of an intentional design. Any reasonable person could consider it to be hard evidence. (No kidding actually!) Many would even call it “proof”.

In this case, there are well-established analytical methods capable of producing exactly that kind of evidence—and L & C seem to be using them. One standard approach is to compare results from multiple shuffled versions of the folio pages. This produces a distribution of quantitative scores—say, cumulative cosine similarity values—measuring how much coherent patterning each random order produces. If the score for one particular ordering falls deep in the tail of that distribution, the probability of such a result occurring by chance can be specifically quantified. If that probability is sufficiently low, then yes—that’s solid evidence of an intentional design by the manuscript’s authors.

It’s not rocket surgery, but proper validation is essential (and too often neglected). If L & C present a proposed reordering without demonstrating that their result is statistically improbable by any other scenario, I’ll be the first to raise concerns and call for further testing—time permitting. But based on what Lisa has shown so far, there’s no reason to expect they’ll fall short on that front.

When these analyses are performed competently, there’s simply no reason for them to veer anywhere near pareidolia.
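The shuffle test described in this comment can be sketched in a few lines of Python. This is a toy illustration only, not the analysis Lisa and Colin are running: the page vectors are synthetic (a real study would derive them from the transliterated text), and the names `order_score` and `shuffle_p_value` are invented for this example. The score here is simply the sum of cosine similarities between consecutive pages, which is one plausible reading of "cumulative cosine similarity".

```python
import math
import random

def cosine(u, v):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def order_score(order, vectors):
    """Sum of cosine similarities between each page and the next one."""
    return sum(cosine(vectors[a], vectors[b]) for a, b in zip(order, order[1:]))

def shuffle_p_value(order, vectors, n_shuffles=2000, seed=0):
    """Estimate how often a random page order scores at least as well."""
    rng = random.Random(seed)
    observed = order_score(order, vectors)
    pages = list(order)
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(pages)
        if order_score(pages, vectors) >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return observed, (hits + 1) / (n_shuffles + 1)

# Synthetic data (an assumption for the demo): ten "pages" whose
# vectors drift smoothly, so the natural order is the coherent one.
toy_vectors = {i: (math.cos(0.3 * i), math.sin(0.3 * i)) for i in range(10)}
observed, p = shuffle_p_value(list(range(10)), toy_vectors)
print(f"observed score = {observed:.3f}, estimated p = {p:.4f}")
```

A small estimated p says only that the proposed order is unusually coherent under this particular score. It says nothing about why, which is where the disagreement in this thread actually lies.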

L.F. Davis and Andrew Steckley.

Voynich research often gravitates toward the material: parchment thickness, stains, binding threads. But MS 408 is not a mute object. **It is a text. And texts are meant to be read.**

On folio 78, many see “pipes” or “tubes.” But this is not a hydraulic diagram. It is a **genealogical vein** — a symbolic conduit of lineage and memory. The drawing shows a vein split into **6 + 1 branches**: six daughters and one mother.

In Czech, the word *žila* (she lived) and *žíla* (vein) are spelled identically — **diacritics define meaning**. This is not coincidence. It is cipher.

Material analysis may assist conservation, but without deciphering the text, it remains **contextless**. The author did not draw stains. He wrote encrypted Old Czech, with Polish and Latin elements, using **homophonic substitution**.

He left a cipher, a birth year, and a symbolic system. It is all there — **if one reads**.

Codicology without cryptology is like admiring the cover of a book while refusing to open it.

Josef Zlatoděj

Independent researcher and cryptanalyst

Lisa Fagin Davis: so, it seems that on the one hand codicology is as all-encompassing as you need it to be to support whatever your argument of the day is, whereas on the other everyone else should stay in their narrow technical lane. Right. It seems I’ve learnt a new technical term today, which is “lanesplaining”.

Yes, I did watch your video. I’m starting to wish I hadn’t.

Nick: Sorry, about this. I just mentioned the video as Diane asked about it. I haven’t seen it as it isn’t relevant to my current line of Voynich research, but I didn’t anticipate it causing so much tension.

Lisa Fagin Davis: I appreciate your acknowledgment that codicology alone cannot decipher the manuscript. That’s an important step forward.

However, I believe it’s time to broaden the discussion. There are several medieval manuscripts with strong ties to the Czech cultural sphere that have been misrepresented or misunderstood in academic circles. One example I would highlight is the *Aberdeen Bestiary*.

Scholars often treat it as a symbolic zoological text, but in reality, it is encrypted using Jewish homophonic substitution — the same system found in the Voynich Manuscript and the Rohonc Codex. When decrypted properly, it reveals layers of Czech historical narrative.

Codicology is a valuable discipline for understanding structure and materiality. But when it comes to grasping the encrypted meaning of the text — especially when the cipher is linguistic and genealogical — codicology alone is not sufficient.

We need to integrate cryptographic, linguistic, and symbolic analysis to truly understand what these manuscripts are saying. Otherwise, we risk repeating the same surface-level assumptions for another hundred years.

Lisa Fagin Davis: after reading many of your academic articles across various journals, I’ve come to the conclusion that you stand at the top of the pyramid of scholars who have spent decades trying to explain the Voynich Manuscript to a broader, non-specialist audience.

However, I believe someone else should occupy that peak — namely, the author of this blog, Nick Pelling. As a skilled cryptanalyst and expert in historical ciphers, he has the strongest qualifications among your group to decipher the Voynich text.

Let me give you a concrete example that illustrates what even your best efforts have not yet achieved.

A few years ago, you posted an image on Twitter and asked what the letters might mean. The image contained just two words: **otco otccq**.

The first word is easy to read — even AI understood it quickly. It’s written in Old Czech and reads **otco**, which in modern Czech means **father**.

The second word reads **otsca** — but to understand it, one must know homophonic substitution, phonetics, and dialect. In modern Czech, it reads **otska**, meaning **of the father**.

Together, the phrase **otco otska** means **father of the father**, or simply: **grandfather**.

On this folio, the author split the letter **M** by inserting a small **t** into each word. For the author, it made no difference whether he wrote **M** or two separate **t** letters — because, and this is crucial, in Jewish homophonic substitution, the number 4 corresponds to **D, M, T**.

This is the meaning of those two words — and it clearly demonstrates the cipher, the linguistic structure, and the fact that the manuscript is written in Old Czech.

I believe even a scholar without a university degree could understand this — if they are capable of logical reasoning.

Mark Knowles: don’t give it a second thought, I know I certainly won’t.

I really cannot see how someone can possibly expect to get some useful result from a latent semantic analysis applied to the Voynich manuscript.

As the term says, latent semantic analysis is a cluster analysis designed around semantics, that is around meanings. Natural languages are a flawed medium for meanings. Writing systems are a flawed medium for natural languages. In the best case scenario, latent semantic analysis works with very flawed data. In the case of the Voynich manuscript we can reasonably expect the worst of the worst cases: the writing system, which is already a flawed medium of a flawed medium of the real data, is twisted and distorted by a number of factors which further obfuscate meanings willingly or unwillingly, that is spelling mistakes, semantic mistakes, shorthand, steganography, cryptography etc.

A simple and simplified example using the Italian language and the common Italian writing system. The common Italian orthography has many homographs for completely different words, because Italian phonology has seven vowels, but the writing system usually employs just five symbols for them, because Italian phonology differentiates words by their primary stress, but the primary stress is not usually written down etc.

So we have the written word “ancora” for both “àncora” (“anchor”, primary stress at the beginning) and “ancòra” (“too, yet”, primary stress in the middle), “pesca” for both “pèsca” (“peach”, open pronunciation of “e”) and “pésca” (“fishing”, close pronunciation of “e”), “dotto” for both “dòtto” (“learned”, open pronunciation) and “dótto” (“duct”, close pronunciation) etc.

LSA gives different results for the same Italian texts written according to the usual way or written according to a stricter orthography. A standard text about fishing (“pésca” means “fishing”, “pésco” means “I fish”) could be easily hallucinated for a text about horticulture (“pèsca” means “peach”, “pèsco” means “peach tree”). The same texts cannot be confused by LSA if I use a stricter orthography. Different writing systems for the same language affect the results of a latent semantic analysis. Mix in shorthand, errors and obfuscation and see what possibly could happen.

Back to the Voynich. Before using a novel technique, one should test it. Luckily enough this can be reasonably done in the case of the Voynich: we have enough codicological evidence to sort Quire 13. The most probable folio sequence is: 76-80-82-84-78-81-75-77-79-83. Let’s test LSA against Quire 13 and let’s see what we get. If the resulting sequence is quite different from the one above, then we can safely ignore LSA and its results.
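One way to make "quite different" measurable is to score any candidate ordering against the Quire 13 sequence given above. The sketch below is only an illustration: the two measures (Kendall's tau and a count of shared neighbouring folios) are my choice rather than anything proposed in the thread, the function names are hypothetical, and the candidate is just the current foliation order standing in for an LSA result that has not been published.

```python
from itertools import combinations

# Reference sequence for Quire 13 as quoted in the comment above.
Q13_REFERENCE = [76, 80, 82, 84, 78, 81, 75, 77, 79, 83]

def kendall_tau(reference, candidate):
    """Kendall's tau: +1 for identical order, -1 for fully reversed."""
    if sorted(reference) != sorted(candidate):
        raise ValueError("both sequences must contain the same folios")
    rank = {folio: i for i, folio in enumerate(reference)}
    ranks = [rank[folio] for folio in candidate]
    # A pair is discordant when the candidate puts it in the opposite order.
    discordant = sum(1 for a, b in combinations(ranks, 2) if a > b)
    n_pairs = len(ranks) * (len(ranks) - 1) // 2
    return 1 - 2 * discordant / n_pairs

def shared_adjacencies(reference, candidate):
    """Count neighbouring folio pairs (in either direction) found in both."""
    ref_pairs = {frozenset(p) for p in zip(reference, reference[1:])}
    return sum(
        1 for p in zip(candidate, candidate[1:]) if frozenset(p) in ref_pairs
    )

# Stand-in candidate (an assumption): the folios in their current
# numbered order, used here only to exercise the functions.
candidate = list(range(75, 85))
print("tau:", round(kendall_tau(Q13_REFERENCE, candidate), 3))
print("shared adjacencies:", shared_adjacencies(Q13_REFERENCE, candidate))
```

Neither number settles anything on its own. A threshold for "close enough" would still have to be argued for, for instance by comparing against the scores that random orderings achieve.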

Mark Knowles,

There is no reason to apologise for sharing information about current research. More reason for those to apologise who don’t. Besides, how can you know what is or isn’t relevant to an understanding of the manuscript, its form and contents, until you’ve seen it for yourself?

On a personal note, one of the first signs all was not well in Voynich studies was when a certain Voynichero (not Nick) reacted with instant hostility when I asked members of a forum for information about previous studies on a certain Voynich topic. That person pronounced as if he were some official law-maker that “to cite precedents is unnecessary”, went on to order all present to “pay no attention” to my question (or indeed to me) and later told me when I spoke of Nick’s codicological observations that I shouldn’t bother with palaeography and codicology because they were “unnecessary” and “too complicated”. The same person now presents themselves as competent in both.

Back then, Nick was the only person willing to answer such normal queries as whether anyone had touched on a particular topic before (absolutely basic sort of question and Nick’s response basic scholarly ethics and courtesy).

People are actually so intimidated once in a group that they dare not be seen responding to outsiders. I consider it both sad and shameful that only here – and thanks to you – would such a question be answered. You, and on her own blog, by Ruby Novacna.

I hope the energetic discussion won’t make you feel that silence is better.

My own position – just btw – if what is said or written or broadcast is about the manuscript, it’s to be presumed relevant until tested and found otherwise. Active debate is part of the testing process and polite silence is evidence of censorship – tacit or overt.

I like what I’ve seen from Andrew Steckley, both here and elsewhere. I have always appreciated Nick’s ethical standards as a scholar and at least half of his work in ‘Curse’ (the non-Averlino half – sorry Nick) I consider a lasting and important contribution to the study.

If they want to debate the value of this method and whether or not it is ‘codicology’ why not? So long as the focus doesn’t shift from ‘codicology or not’ to a focus on Lisa as representative, it’s all illuminating and worth hearing. Only con-men rely on one-sided coin.

Anyway, thanks again Mark. Hope your work goes well.

Stefano Guidoni: I heartily agree, yet I’d also note (as I did in the post) that an LSA analysis might end up (probabilistically) with weak similarities based on metalanguage and evolving cipher system, rather than on meaning. Note also that Lisa Fagin Davis specifically mentioned in her talk that she felt her new analysis disproved my suggestion (from years ago) that one of the Q13 bifolios appears to have ended up reversed, but we’ll have to wait and see her paper when it comes out.

PS: nice anagram on your email address 😉 For my son, I came up with “enlargepixelland”, so you can almost certainly work out his first name. 🙂

Stefano Guidoni: You seem to be a little confused. LSA is not a cluster analysis technique. And sorry– but that pretty much summarizes the accuracy of the rest of your comments.

Stefano Guidoni: uh oh, the Precision Police are here, and they’re sounding pretty dismissive. Better throw in the towel now, OK? [*]

[*] Not my actual opinion.

Nick, Stefano: It’s ok. I’m just letting you off with a warning for now.

Andrew Steckley: thank you, Mr Policeman sir, I appreciate it. [*]

[*] Not my actual opinion

Andrew, Nick, Stefano,

This may be too soon but – honestly – thanks. Much learned.

That was a disappointing string of comments. I know nothing about LSA and its likelihood of leading us to the original page order, but I do know that these comments were uncharitable.

The worst that can happen is that it doesn’t work.

Bill: I thought I made a very clear point in the post (about how I thought using LSA wasn’t itself codicology, but that I thought it might possibly lead to some codicology), which led to a load of fairly uncharitable stay-in-your-lanesplaining.

All the same, I then went away and thought a bit more deeply about it all, which led to some actual (physical) codicology, though no doubt I’ll get lambasted for that as well: https://ciphermysteries.com/2025/10/25/jehan-le-begue-giovanni-alcherio-villela-petit-quaterni-fascicular-culture-and-lisa-fagin-davis

Caught up with some overdue literature, and also watched this video as recommended to me by a friend.

Absolutely incredible what Lisa, Colin, Claire Bowern, and many other experts have done in their time working on this project.

I have my thoughts as “person with a hunch” but I’ve been enjoying my time seeing all the developments and bits of new info unravel. Exciting stuff!! My background is strictly in plants (and managing *all* people) so my main interest mostly lies there in the drawings. I have a journal of the plants from VM and their corresponding species, if I can get down to specifics. After reading this looong winded thread, OOOF! Let’s let the respective experts do their thing, K?

Looking forward to the next pieces

-N

@N. Jackson,

Your interest in the vegetal drawings leads me to ask a favour. I wonder if you are able to point me to a study which lists the plants known to Mesue the younger as useful in pharmacy?

I understand that Lisa and Colin will soon present a paper or talk (or something) setting out their subsequent work and findings on this topic.

I hope Nick will review it and that (as above) others will be willing to add their own perspectives.

It seems to me from the comments to this post that Nick is saying that while, *in theory* the use of LSA is supposed to help re-construct connectedness in the written text across various folios and/or quires, it won’t necessarily do that or, if it seems to, may return results distorted by cryptological factors as ‘noise’.

As a basis on which to suggest re-ordering of the physical, material, sheets, this also seems to me not codicology as such.

What happens if the LSA ‘order’ is contradicted by such things as different scribal hands, or obviously different stylistics for the drawings, or water-stains, or stitch-marks, or bleed-through, or impressed pigment. Those issues – it seems to me and Nick will surely correct me if my impressions are mistaken – are why Nick says the virtual mapping of Voynichese isn’t ‘codicology’.

From my point of view, the approach that Lisa and Colin took in the first video seems risky and a little dubious for quite other reasons. First, though it is not unusual for medieval manuscripts to be disordered, the normal practice is to identify and compare the written text(s) with the text(s) the scribes were working from, and reconstruct the proper folio order on that basis. (Alin Suciu’s work is the very model for research of that type, though he’s no Voynichero). Without knowing the sources, or being able to read Voynichese, Lisa and Colin are – it seems to me – constructing abstractions upon hypotheticals.

Maybe the upcoming paper/lecture will quiet my doubts. Maybe Nick will review it more positively. What I feel concerned about is the possibility or probability that any inferences taken from those statistical results will be immediately elevated to the status of another ‘Voynich doctrine’ – asserted “what we know” and against which future researchers will have to waste weeks, even years, in refutation or efforts to correct false interpretation.

My other demur comes from my own background in a form of archaeological-historical studies and curatorial work: that is, we preserve the evidence as it is and do not ‘reverse’ the effects of time and change upon the artefact. In the case of the Vms, my initial or default position (which evidence can alter) is that whoever arranged the final binding of the quires into their current order intended that order – and part of our job is to understand *whether* this is so, and, if so, what intention informed those persons’ decisions and, if not, what circumstances could have brought about such disorder. Is it the aftermath of a manuscript’s being sent to a printer? (The binding threads might allow us to suggest when and where we might look for a printed work containing some or all of it.) Is it the result of some person (such as Kircher, for example) appropriating materials to suit his own interests? Of people gathering up important information as they prepare to flee plague, war or re-location?

Even a virtual ‘fixing’ of the primary evidence can be found later to have been based on false premises, inadequate data or tools and so on. Ask any modern conservator of ancient artefacts and buildings about the horrors they face in having to undo efforts made before them to ‘reconstruct’ and ‘fix’ damage or disorder.

I’m not so much pessimistic as wary. I do hope for a range of opinion and discussion if Nick does decide to review the upcoming talk, or paper… One does miss the lively exchanges of previous years that gave life to the first mailing list and to various Voynich blogs, of which Nick’s is I think the longest to survive.