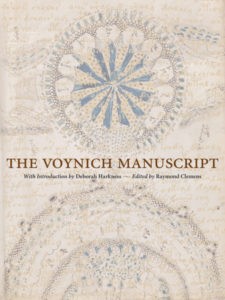

Since the recent release of the Yale University Press photo-facsimile, a number of quite different takes on the Voynich Manuscript have appeared online. Here are a fair few, brutally summarized:

Voynich Review #1: Nature

“Cryptography: Calligraphic conundrum” by Andrew Robinson is well-informed and clear: but having written books on Champollion, Young and Ventris, and on Indus scripts (as well as a whole load of other lost languages), he’s on the right side of most of the debates. For him, the Voynich Manuscript is at heart a cryptographic mystery rather than a linguistic one.

“What hope is there of decoding the script? Not much at present, I fear”, Robinson glumly concludes, though it has to be said that his follow-on assertion that “Professional cryptographers have been rightly wary of the Voynich manuscript ever since the disastrous self-delusion of Newbold” isn’t quite on the mark – the real answer would be far less reductive and indeed far more complicated.

Incidentally, if you put ‘Voynich’ into the search field at the top right of the Nature website, it brings up a link to a 1928 article by Robert Steele (though behind a paywall), with the unpromising-sounding incipit “It is known that Bacon was interested in ciphers…” Who says that mainstream media don’t give the Voynich Manuscript proper coverage, eh?

Voynich Review #2: Star Tribune

“Review: ‘The Voynich Manuscript,’ edited by Raymond Clemens” by Peter Lewis starts with brio (“It is a fine morning in the Holy Roman Empire. The year: 1431”), before swiftly moving on to applaud the photo-facsimile’s accompanying essays as “absorbing squibs” (I always thought that was more of a satirical term, but perhaps he is using a short-burning firework metaphor here).

But after sustaining this for so long, he goes and spoils it somewhat:

But listen: An applied linguistician recently claimed to have deciphered the words “Taurus” and “centaury,” an herb. Also recently, the American Botanical Council published a paper suggesting one of its plant drawings intimates a Mexican connection. The Voynich likes nothing better than deepening its mystery.

*sigh* Oh, well. 😐

National Review: Bookmonger

This 13-minute podcast is a radio-style telephone interview with Ray Clemens. The Internet’s previous dearth of good images of Clemens is now somewhat assuaged by the picture of him the Bookmonger included:

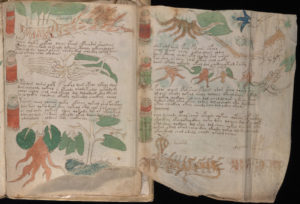

He calls the Voynich Manuscript’s illustrations “beautiful” (which is perhaps a bit of a stretch), and seems to be particularly taken with the Voynich nymphs. Clemens is very pleased with the foldout sections and the quality of the colours in Yale’s photo-facsimile. The Voynich Manuscript was “one of the first manuscripts [that the Beinecke] digitized”, and it “receives far more attention than any other book on the website […] and that’s for many different reasons”.

Solving it would be nice, he thinks: but he also believes “at this point that that’s a fairly quixotic goal […] the chances of this actually being cracked in that sense are pretty remote […] my personal feeling is that I think it will remain an enigma for quite some time”.

Voynich Review #3: The New Yorker

“The Unsolvable Mysteries of the Voynich Manuscript” by Josephine Livingstone appeared in the New Yorker a fortnight ago. For her, the hazy theories floating ethereally around the Voynich are the same kind of “speculative knowledge [that] flourishes in moments of uncertainty and fear”. She continues:

Humans are fond of weaving narratives like doilies around gaping holes, so that the holes won’t scare them. And objects from premodern history — like medieval manuscripts — are the perfect canvas on which to project our worries about the difficult and the frightening and the arcane, because these objects come from a time outside culture as we conceive of it.

Though Livingstone never quite says it directly, it seems reasonably clear to me that she sees study of the Voynich as being inevitably riddled with pseudohistory and pseudoscience, and that its blood brothers (and indeed sisters) are quasi-occult things such as conspiracy theories, astrology, alchemy, and tarot.

For her, the Voynich is unreadable period, and so thinks we should perhaps approach the photo-facsimile more as we might a Zen koan, as a way “to remember that there are ineluctable mysteries at the bottom of things whose meanings we will never know”.

Voynich Review #4: The Paris Review

In “The Pleasures of Incomprehensibility : Why we don’t need to decode ‘the world’s most mysterious book.’ “, Michael LaPointe takes our dissatisfaction with the Voynich Manuscript’s inscrutability as a sign of one of modernity’s shortcomings – that we moderns are somehow too restless to be truly comfortable with something that cannot be intellectually conquered and known.

Instead, he suggests we should look at it as if it were a work of art, one cloaked in the same incomprehensibility that the Dadaists celebrated. For as Tristan Tzara put it, “When a writer or artist is praised by the newspapers, it is proof of the intelligibility of his work: wretched lining of a coat for public use.” And so LaPointe concludes:

“At a time when even the most mysterious artist is subject to history and biography, it’s amazing to encounter a book that floats outside of all disciplines. The Voynich Manuscript exudes an aesthetic aura while squirming out of every category.”

In the end, though, LaPointe can’t help but be seduced by the suggestion of a hoax, a pre-modern postmodernist canard:

“It could very well have been composed as an elaborate lampoon of medieval knowledge, and it’s amusing to imagine that we’re still falling for the trick.”

Versopolis / Knight

Though the Versopolis website normally focuses on poems (errrm… the clue’s in the name), it has recently taken a step sideways into the Voynich world with two commissioned articles.

The first, by Kevin Knight, is a fairly straight-down-the-road factual review of Yale’s photo-facsimile, despite tarrying early on in full-on personal My-First-CopyFlo recollection mode:

My first copy of the Voynich was a black-and-white Christmas present from my father. It might have been a bootleg copy. He wrote “Good luck deciphering!” inside the front cover. I bit, and by the time I had paged through the low-quality scan, the hook was set.

Ultimately, even though Knight clearly has his own well-formed opinion about the Voynich Manuscript, on this particular occasion he chooses to toe the official Beinecke line, albeit with a friendly micro-dig at the photo-facsimile edition’s coffeetableitudinosity:

Perhaps one day, a person named X will uncover and assemble the right set of clues, and as happened with the Egyptian hieroglyphs and Mayan carvings, the answer to Voynich will suddenly fall into place. Meanwhile, with the help of Yale University Press and Amazon.com, the enigma is busy spreading itself to coffee tables, bedsides, and offices throughout the world, trying to find its X.

Versopolis / Zandbergen

The second Versopolis article is “1. The Making of a Mystery” by none other than Rene Zandbergen.

Rene lays out the known provenance of the Voynich Manuscript in a (once again) straight-down-the-road manner, though his assertion that “its historical value is probably small” is perhaps a little early. I’d also probably take Rene slightly to task for writing an article about the manuscript’s origins while bracketing out its first 200 years: but then again, given that this is the period I’m most interested in reconstructing, I would say that, wouldn’t I? :-p

Futility Closet

In Episode #129, the Futility Closet podcast presenters take on the mystery of the Voynich Manuscript (though note this is only in the first 18 minutes of the podcast, after which they move on to various lateral thinking puzzles).

By and large, they do a pretty good job of the subject, though never quite managing to break through the layer of unloveable Wikipediaesque lacquer that tends to coat most online accounts. Oh, and personally, I didn’t quite manage to buy into the presenters’ interaction schtick thing, so for me it wasn’t really anything more than a nice-sounding recital. But make of it all what you will, that’s how the Internet works.

In Summary

If you already know a tolerable amount about the Voynich Manuscript, you’ll probably be left fairly cold by pretty much all of the above: once you have your copy of the Yale photo-facsimile, there’s really little more to be said.

And that, of course, is the key to the problem: that there is a heavy-hearted resignation to the coverage when viewed as a whole – a kind of glum nihilism that denies the Voynich Manuscript’s tricksy magic and curious interest. It is as if by asking people to buy their own copy, the Beinecke has brought it to their eyes in the context of its being an oddly undesirable artefact – that the paradox is now not about trying to read the unreadable, but about buying the unwantable.

For the Voynich Manuscript is, for all of Wilfrid Voynich’s hyperbolic antiquarian fluffery and Yale Universty Press’s best social media outreach / promotional efforts, still just as much an ‘ugly duckling’ as it was a century ago. While it is (and probably will continue to be) many things to many people, it is, just as Rene Zandbergen’s article (correctly) says, not beautiful. Even James Blunt couldn’t make it so, not even “an angel with a smile on her face” (errrm, and waist-deep in blue-daubed pipework).

What, when the spell rubs off, will non-Voynicheers actually think about the copy of the photo-facsimile their earnest cousin gave them for Christmas? I don’t know: we researchers all still have a mountain to climb before we reach the foothills of the real mountain, and I have no idea yet whether the photo-facsimile will be part of the solution or just another part of the problem. It’s pretty, though. 🙂